Let’s say a user asks you to create a flat file output from a database query. Piece of cake. Open SSMS, write the query, run it and copy the results (with headers using SSMS 2008) into a text file. Send the user the file and and you’re done with time left over for a siesta.

But oh wait—they want to be able to open it in an older version of Excel, which will only display ~ 65k rows per sheet. This file has 190k rows. So they ask you to write the output to multiple files, limiting the total rows per file to 60k.

Now what?

You could simply open the raw flat file, go to line 60,001 and cut-paste lines 60,001–120,000 into another file and repeat as necessary. That’s ok for a one-off task but not a good strategy if you are going to be doing this several times. It can be cumbersome and error prone to manually create several files this way.

No—the better approach is to make SSIS do the heavy lifting. I had to do this recently and thought I’d share my solution.

Here is how the process roughly breaks down:

- Pull the data from the source.

- Stage the data.

- Use the number of records returned and the max number of rows I want in each file to determine how many files I’ll need.

- For each file I need, run through a loop, figure out which rows go into the current file, give the file a name and write the rows to the file.

There are a few other details to cover too, like how to name output files and read from write to variables. We’ll look at all of that.

Package Variables

For this package I set up the following variables:

I’ve hidden the scope in this screen cap. They are all in the package scope.

Package Overview

And here is what the package looks like. It’s bare bones simple.

Create Staging Table

I drop and create the staging table from scratch each time. It contains an identity field and I want to make sure I’m always starting with a seed value of 1. I could handle this differently but this method is simple and works reliably.

Stage the Data

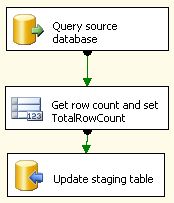

Next, I stage the data. That Data Flow task looks like this:

I query the source database, get the number of rows returned so I can update the TotalRowCount variable, then update the staging table.

Determine Number of Files to Write

For this particular request, the user wanted a maximum of 60,000 rows per file. I don’t show it above but the MaxRowsPerFile package variable value is set to 60000. The logic to determine number of files needed is simple:

NumFiles = Ceiling(TotalRowCount / MaxRowsPerFile)

So if the process returned 190k rows and we only wanted 60k per file:

190,000 / 60,000 = 3.16 Ceiling(3.16) = 4 NumFiles = 4

Pretty basic.

Here is the C# code to do that and set the NumFiles package variable:

Remember to set your ReadOnlyVariables and ReadWriteVariables variables appropriately in the Script Task Editor:

At this point we have pulled our source data and put it into a staging table. We know how many total rows we have. And we know how many files we will be writing. Now we need to select from the staging table and write the results to output files.

The For Loop Container

In a For Loop Container, you create some kind of condition. The loop will execute until that condition is met. I use the NumFiles variable to control how many times I run through the loop. To do this, I used the LoopCounter variable and the NumFiles variable. I start with LoopCounter = 1 and I increment the LoopCounter each time the loop executes. The loop runs while LoopCounter <= NumFiles. If I’m creating 4 files, the loop executes 4 times. Here is how the loop is configured:

The @LoopCounter and @NumFiles variables reference package variables.

Inside the loop we have to do a few things:

- Determine which rows are going to be selected for output.

- Name the output flat file.

- Select the right data.

- Write the data to the flat file.

Calculate Which Rows to Select

How can I control which rows to select? Remember above I wrote that the staging table has an identity column and it is guaranteed to start at 1 each time I run this process. I called this field RecordNum. This means I can qualify my query using the RecordNum.

To do that, I’ll need to figure out which row to start on and which to end on each time I go through the loop. I’m going to refer to that as the window and it’s defined by two package variables: LoopWindowStart and LoopWindowEnd. The logic behind calculating the window is:

LoopWindowStart = (LoopCounter * MaxRecordsPerFile) - MaxRecordsPerFile LoopWindowEnd = LoopWindowStart + MaxRecordsPerFile

Let’s use the same numbers again and say I only want 60k rows in each file.

The first time through the loop my window looks like this:

LoopCounter = 1 MaxRecordsPerFile = 60,000 LoopWindowStart = (1 * 60,000) - 60,000 LoopWindowStart = 0 LoopWindowEnd = 0 + 60,000 LoopWindowEnd = 60,000

The 2nd time through:

LoopCounter = 2 MaxRecordsPerFile = 60,000 LoopWindowStart = (2 * 60,000) - 60,000 LoopWindowStart = 60,000 LoopWindowEnd = 60,000 + 60,000 LoopWindowEnd = 120,000

The 3rd time through:

LoopCounter = 3 MaxRecordsPerFile = 60,000 LoopWindowStart = (3 * 60,000) - 60,000 LoopWindowStart = 120,000 LoopWindowEnd = 120,000 + 60,000 LoopWindowEnd = 180,000

And you can guess what the 4th time looks like.

But won’t there be overlapping values?

No. And you’ll see why below. But first, here is the C# script that shows how we set the LoopWindowStart and LoopWindowEnd variables:

Again, don’t forget to set the ReadOnlyVariables and ReadWriteVariables.

Name the Output File

I created a Flat File Connection in the Connection Manager. (Hint: always create a sample file first with 1 row and create your flat file connection by pointing it to the sample. That way you don’t have to create the entire definition in the connection editor, which is about as appealing as getting jabbed in the eye with a rusty can opener.) In the flat-file connection properties, I used the Property Expression Editor to set the ConnectionString.

When I created the package initially, I set the HomeDir variable to point to my final output directory (including a trailing slash). The HomeDir might look like C:\output\. (Like the MaxRowsPerFile variable, I set this at design-time. It’s not something I set through scripting.)

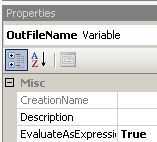

OutFileName is a variable but we haven’t set it through any scripting. So how does it get set?

Go to the property editor for the variable and set EvaluateAsExpression to True.

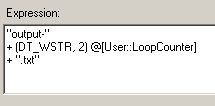

Then go into the Expression Editor and use a combination of literal strings and the LoopCounter to create the filename:

Your outputfiles will evaluate to:

output-1.txt output-2.txt output-3.txt etc...

Each time through the loop then you will be writing the following files:

C:\output\output-1.txt C:\output\output-2.txt C:\output\output-3.txt etc...

Select Output Data and Write to Output File

Here we need to select specific rows to bring into the Data Flow. That Data Flow task looks like this:

The staging table query uses the LoopWindowStart and LoopWindowEnd variables. There are two parts to this. The first is to write the query with the parameter placeholders:

Notice how I’m using WHERE RecordNum > ? with AND RecordNum <= ?. This is why the records won’t overlap. Say I have 190k records and will be writing to 4 files. This means 4 times through the loop and it means 4 windows. Here is how things will look.

1st Pass through loop:

LoopWindowStart = 0 LoopWindowEnd = 60,000 WHERE RecordNum > 0 AND RecordNum <= 60,000

Records 1 - 60,000 output to c:\output\output-1.txt

2nd Pass

LoopWindowStart = 60,000 LoopWindowEnd = 120,000 WHERE RecordNum > 60,000 AND RecordNum <= 120,000

Records 60,001 - 120,000 output to c:\output\output-2.txt

3rd Pass

LoopWindowStart = 120,000 LoopWindowEnd = 180,000 WHERE RecordNum > 120,000 AND RecordNum <= 180,000

Records 120,001 - 180,000 output to c:\output\output-3.txt

4th Pass

LoopWindowStart = 180,000 LoopWindowEnd = 240,000 WHERE RecordNum > 180,000 AND RecordNum <= 240,000

Records 180,001 - 190,000 output to c:\output\output-4.txt

Pass the variables to the query using the Parameters editor:

This pulls the rows I want into the Data Flow.

The last task simply points the Data Flow to the Output File. That's it.

I'm interested to see if anybody has come up with something better.

(This was a quick SSIS package I threw together to handle a simple request. It lacks error handling and logging and I wouldn't use it like this in a mission-critical scenario.)